Architecture Design Specification The Agent Skill Framework for Dynamic Intelligent Systems

📎 附件资料

- 在线预览(幻灯片模式):点击打开

- 下载:/assets/the_agent_evolution.pdf

🖥️ 幻灯片预览(支持全屏)

1. The Paradigm Shift: From Static Prompts to Encapsulated Skills

The current trajectory of Large Language Model (LLM) integration has reached a critical inflection point: the transition from “conversational companions” to an “autonomous workforce.” This strategic shift necessitates a move away from fragile prompt engineering toward a decoupled, “Skill-based” architecture. In this paradigm, intelligence is no longer a monolithic block of text but a library of encapsulated, dynamic capability units. This modularity allows for “Progressive Disclosure”—the architectural logic where complex capabilities are revealed and loaded only when relevant—minimizing context noise and maximizing execution precision.

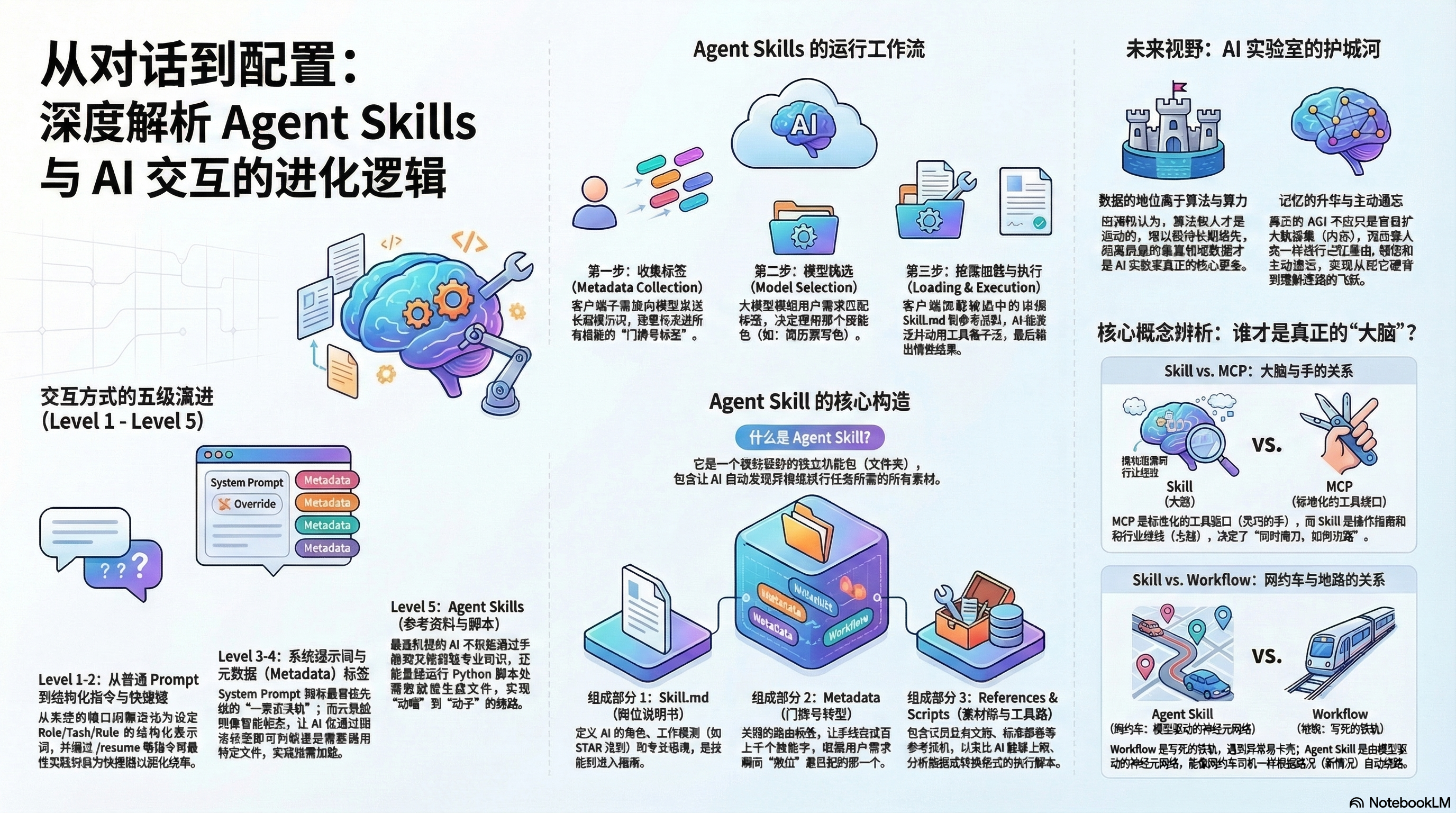

The evolution of AI interaction reflects a systematic drive toward higher state management and context isolation, categorized into five levels:

Evolutionary Taxonomy of AI Interaction (L1–L5)

| Level | Mechanism | Technical Implementation | Impact on Result Control |

|---|---|---|---|

| L1 | Structured Prompt | Role, Task, Constraints | Transition from “Chatting” to Constraint-based Generation. |

| L2 | Shortcut Command | Trigger-based logic (e.g., /resume) |

Freezes best practices; encapsulates complex rules into single-token triggers. |

| L3 | System Metadata | system.md / cursorrules |

Establishes top-level priority; prevents Context Drift across long sessions. |

| L4 | Routing Tags | Metadata Tagging | Enables “On-Demand Loading” (Lazy Loading) to resolve token overflow. |

| L5 | Agent Skills | Progressive Disclosure | Full capability encapsulation; dynamic retrieval of instructions, data, and tools. |

Modular Agent Skills allow for system-level maintenance without altering the core inference engine or monolithic application code. This architecture treats capabilities as “plugins” to the agent’s latent space, facilitating the transition from Level 1 to Level 5 autonomy.

2. The Four Pillars of Skill Composition

A standardized skill structure is mandatory for reliable model discovery and to mitigate “hallucinations of invention.” By providing a rigorous schema, we ground the agent’s behavior in specific domain experience rather than general-purpose probability.

2.1 Instructional Guidelines (skill.md)

This file defines the Experience (the “Brain”). It acts as the specific “Job Description,” utilizing frameworks like the STAR principle (Situation, Task, Action, Result). It dictates the cognitive logic, professional tone, and specific behavioral constraints the agent must adopt for a localized task.

2.2 Routing Metadata (Tags)

The “Door Plate” of the skill package. This lightweight metadata allows the system to perform discovery without loading the full instruction set into the context window. It facilitates efficient identification within the latent space, ensuring the agent only “activates” the skill when the user intent matches the tag.

2.3 Reference Materials (Data)

The “Reference Library” serves as a grounding mechanism. To prevent invention, the agent “consults and cites” internal business docs, templates, or manuals. This is the equivalent of “flipping through the manual” before answering, ensuring high-fidelity outputs aligned with organizational truth.

2.4 Execution Scripts (Tools)

The “Toolbox” represents the Interface (the “Hand”). These Python scripts or APIs facilitate the transition from Cognition to Action. Whether generating a formatted PDF, performing data analysis, or executing a search, these scripts move the agent from latent reasoning to deterministic system output.

[!NOTE] Architectural Composition Formula Skill = Domain Experience (skill.md) + Discovery Metadata (Tags) + Grounding Materials (Data) + Execution Interface (Scripts)

3. Operational Lifecycle: The 5-Step Discovery and Execution Flow

To achieve cost-efficiency and performance, the framework employs “Lazy Loading.” This ensures the context window is only populated with task-specific data, preserving the model’s limited attention resources.

The Standard Execution Workflow

- User Input Analysis: The client (e.g.,

claworcbot) intercepts the raw request. - Metadata Collection: The system aggregates only the door-plate tags from the skill library, ignoring the heavy instructional payloads.

- Model Selection: The LLM evaluates the lightweight tags to identify the specific skill package required for the task.

- Skill Activation: Once selected, the system pulls the full

skill.mdand reference materials into the active context window. - Task Execution: The agent executes the relevant scripts/tools, grounding the output in the reference data to produce the final result.

Implementation Scopes

- Personal Skills (

**~/.cloud/skills**): Stored in the user’s home directory. These follow the developer across projects, encapsulating personal coding styles, commit message formats, and documentation preferences. - Project Skills (

**.claw/skills**): Stored in the project root. These ensure team-wide standardization for brand guidelines, coding standards, and project-specific automation.

Note: While *claw.md* files (global instructions) are loaded into every conversation to maintain session-wide state, *skill.md* files are loaded strictly on-demand.

4. Comparative Analysis: Skills vs. Workflows vs. MCP

Navigating the “Agentic Era” requires a clear distinction between these three architectural patterns to avoid complexity debt.

Agent Skills vs. Traditional Workflows: “Ride-Hailing vs. Railway”

Traditional Workflows are like railways; they follow fixed, deterministic tracks (Step A -> Step B). They are inherently brittle and crash when encountering “unknown exceptions.” Agent Skills represent a “Ride-Hailing” model. The agent is goal-oriented; it knows the destination and uses its intelligence to dynamically re-route around obstacles or “traffic” (unforeseen errors), providing a resilient execution path.

Agent Skills vs. Model Context Protocol (MCP): “Brain vs. Hand”

MCP provides a standardized Interface (the “Hand”). It allows the model to connect to local files or databases. However, a hand without experience is useless. The Agent Skill provides the Domain Experience and judgment (the “Brain”), instructing the “hand” on when and how to use the tools effectively.

Architectural Logic Comparison

| Category | Logic Type | Flexibility | Ideal Use Case |

|---|---|---|---|

| Traditional Workflow | Determinative / Linear | Low | Repetitive, predictable data pipelines. |

| MCP | Interface-driven (Standardized) | Moderate | Standardized resource access (Databases/Files). |

| Agent Skill | Intelligence-driven (Probabilistic) | High | Multi-step tasks requiring expert judgment. |

5. Design Principles for Resilience and Memory

Building production-grade agents requires solving the “Context Window Exhaustion” and “Hallucination” problems through sophisticated memory management.

Technical Memory Hierarchy

- Context/Short-Term Memory: Managed via Attention Sinks and the H2O (Heavy Hitter Oracle) mechanism. H2O ensures that even as the system selectively “forgets” less relevant tokens to save memory, it retains “heavy hitter” tokens essential for maintaining conversational coherence and factual accuracy.

- Long-Term/Weight Memory: Established during pre-training/fine-tuning; provides the agent’s foundational world-view.

- Human-Readable Memory: Following the Cbot architecture, memory must be organized into hierarchical Markdown files (Short-term/Long-term task logs). This allows for system transparency and manual pruning of “bad memories.”

Implementation Standards for Architects

- Rule of Context Isolation: Utilize metadata tags for discovery; never pre-load full instruction sets or

skill.mdfiles into the global context. - Rule of Fail-over Cognition: If an Execution Script returns a

stderror null value, the agent must be programmed to invoke theskill.mdlogic to analyze the error and dynamically re-route via an alternative tool. - Rule of Auditable State: All persistent updates to “long-term” agent memory must be written to human-readable Markdown logs, ensuring the agent’s “learning” is auditable and non-hallucinatory.

This framework transforms general-purpose LLMs into specialized experts, moving beyond simple text generation into the realm of intelligence-driven execution paths.