Architecture Decision Report RAG vs. Long Context Strategies for LLM Integration

📎 附件资料

- 在线预览(幻灯片模式):点击打开

- 下载:/assets/rag_vs_long_context.pdf

🖥️ 幻灯片预览(支持全屏)

1. The Challenge of Context Injection in Static Models

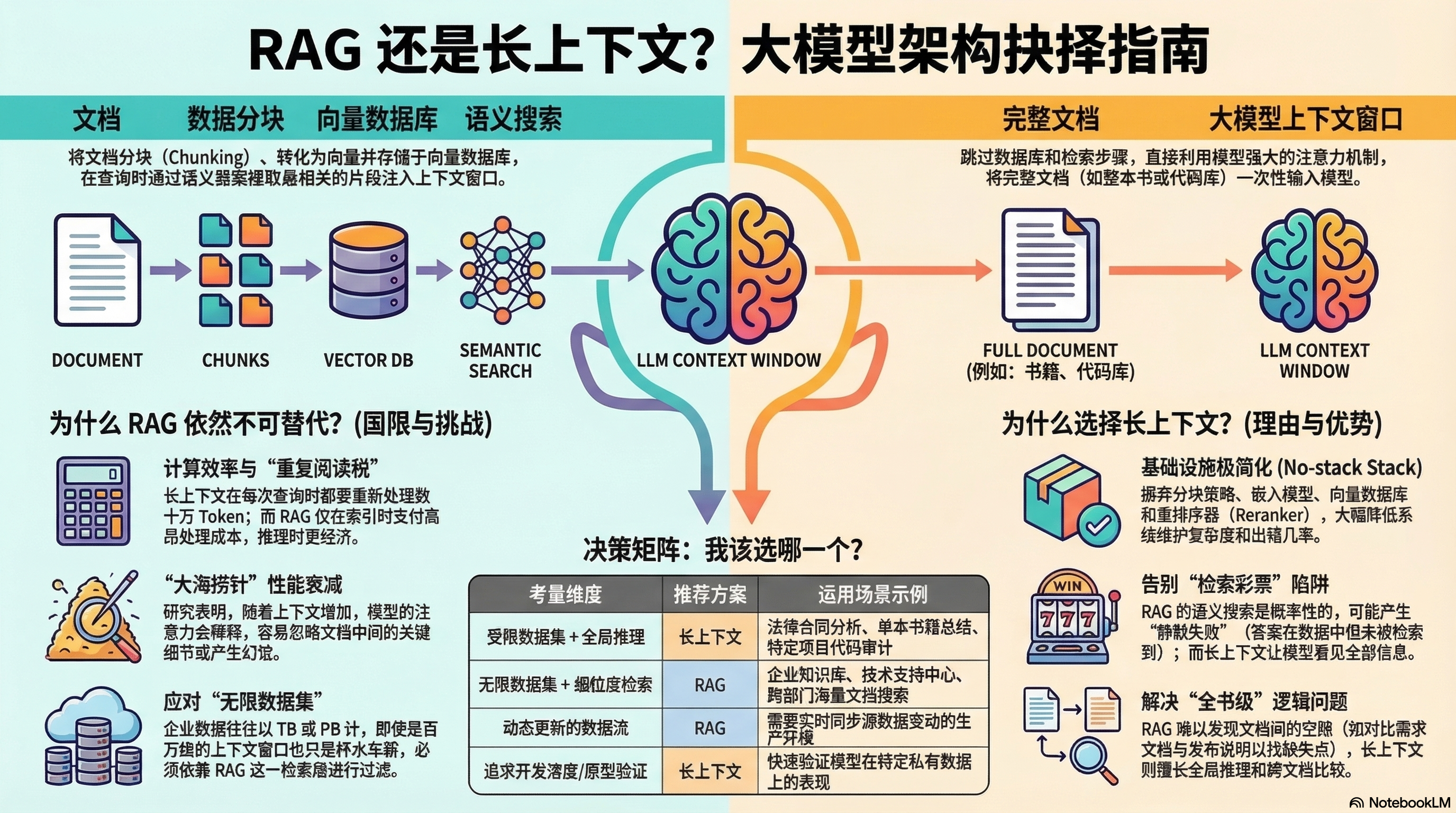

A fundamental architectural constraint of Large Language Models (LLMs) is that they are “frozen in time.” While these models possess a vast compression of human knowledge up to their training cutoff, they lack awareness of real-time events and, more critically, have no access to proprietary enterprise data—internal wikis, private codebases, or sensitive documentation. To make these models operationally relevant, we must solve the problem of context injection: the strategic delivery of the right data to the model at the precise moment of inference.

Current AI infrastructure offers two primary architectural responses to this limitation. The first is Retrieval-Augmented Generation (RAG), an engineering-intensive approach that filters massive datasets into actionable context before the LLM processes it. The second is the emerging Long Context paradigm, a “brute force” model-native solution that leverages massive jumps in token capacity to ingest data directly. Selecting the correct path requires a deep understanding of the trade-offs between complex retrieval pipelines and high-capacity attention mechanisms.

2. Retrieval-Augmented Generation (RAG): The Engineering-Centric Approach

RAG serves as a high-precision tool designed to navigate the “infinite data set.” Rather than overwhelming the model’s attention mechanism with noise, RAG acts as a sophisticated filter that provides only the most relevant signal for a given query.

The RAG Pipeline Infrastructure

Implementing a production-grade RAG system requires a multi-layered stack designed to manage data lifecycle and retrieval precision:

- Data Chunking Strategies: Documentation is decomposed using strategies such as fixed-size, sliding window, or recursive chunking to ensure semantic units remain coherent and consumable.

- Embedding Models: These transform text chunks into high-dimensional embeddings in a latent space, allowing the system to represent semantic relationships numerically.

- Vector Databases: A dedicated storage layer for indexing and querying high-dimensional vectors, enabling fast semantic similarity searches across millions of documents.

- Rerankers: A critical optimization layer that reruns search results through a secondary model to mitigate the precision-recall trade-offs inherent in initial vector retrieval, ensuring the most pertinent context is prioritized.

The “Retrieval Lottery” and Silent Failure

Despite its engineering rigor, RAG is susceptible to the “Retrieval Lottery.” Because semantic search is probabilistic, there is a recurring risk of silent failure: a scenario where the required information exists within the data lake, but the retrieval logic fails to surface the correct chunks. In these instances, the LLM never “sees” the data, leading to incomplete or hallucinated responses that are difficult to debug in automated pipelines. While RAG effectively minimizes the model’s processing load, the infrastructure overhead of maintaining and syncing this stack is substantial.

3. Long Context Windows: The “No-Stack” Paradigm

The “brute force” approach to context injection has recently become a viable competitor to RAG due to the expansion of context windows from the standard 4K tokens of early LLMs to 1M+ tokens in modern frontier models. To visualize this scale, a million tokens represents approximately 700,000 words—enough to fit the entire Lord of the Rings trilogy and The Hobbit into a single prompt with room to spare.

The “No-Stack” Stack

The primary architectural advantage of Long Context is its simplicity. By skipping embedding models, vector databases, and complex synchronization logic, the architecture collapses into a “no-stack” paradigm. The model’s native attention mechanism takes over the heavy lifting, scanning the entire ingested dataset to identify relevant patterns. This eliminates the maintenance burden of the retrieval layer and places the burden of reasoning directly on the model.

Solving the “Whole Book Problem”

Long Context is uniquely capable of addressing the “Whole Book Problem,” where RAG’s snippet-based approach often fails. Consider a comparison between Product Requirements and Release Notes to determine which security requirements were omitted from a final release.

- RAG’s Limitation: A vector search for “omitted security requirements” will retrieve snippets discussing security and requirements from both documents but cannot retrieve the absence of information. RAG shows the model isolated snapshots, preventing it from seeing the “gap” between the two texts.

- Long Context’s Advantage: By ingesting both documents in their entirety, the model can perform global reasoning over the full text to identify omissions that snippet-based retrieval is mathematically incapable of detecting.

4. Technical Trade-off Analysis: Efficiency, Precision, and Scale

The choice between RAG and Long Context is not binary; it depends on data volatility, computational budget, and the required depth of reasoning.

| Dimension | Retrieval-Augmented Generation (RAG) | Long Context Windows |

|---|---|---|

| Infrastructure Complexity | Heavy stack (DBs, Embeddings, Rerankers, Syncing) | Minimal (Native model injection) |

| Compute Efficiency | “Pay once” indexing cost; efficient per-query | “Re-reading tax” paid on every query |

| Data Volatility/Freshness | Efficient for static or slowly changing data | High cost for frequently updated dynamic data |

| Information Density | High (RAG acts as noise-reduction) | Risk of attention dilution (Needle in a Haystack) |

Efficiency, Dilution, and The Infinite Data Set

A primary drawback of Long Context is the “re-reading tax.” Processing a 500-page manual (approx. 250,000 tokens) requires the model to compute attention across the entire volume for every query. However, architectural advancements like prompt caching now bridge this gap for static data, allowing the system to cache the KV (Key-Value) states of large documents and effectively “pay once” for ingestion, similar to RAG indexing.

Conversely, we must account for the “Needle in a Haystack” problem. As context windows scale toward 500,000 tokens or more, the model’s attention mechanism can become diluted, leading it to fail at retrieving specific details or hallucinating based on surrounding text. In this regard, RAG is a noise-reduction architecture; by presenting only the top relevant chunks, it forces the model to focus on the signal, not the noise.

Finally, we must acknowledge the Infinite Data Set constraint. Enterprise data lakes often reach terabytes or petabytes. At this scale, even a million-token context window is a “drop in the bucket.” For true enterprise-wide knowledge management, a retrieval layer remains a functional necessity to filter petabytes of data down to a size the LLM can ingest. The following section provides a framework for selecting the appropriate strategy based on these constraints.

5. Strategic Selection Framework for Technical Leadership

To maximize the ROI of LLM integration, architects must match the injection strategy to the specific data volume and reasoning complexity of the use case.

Scenario A: Bounded Datasets & Global Reasoning

For tasks involving a specific, finite set of documents—such as deep analysis of a legal contract, summarizing a single book, or performing gap analysis between two reports—Long Context is the optimal choice. It eliminates the risk of silent failure, reduces infrastructure complexity, and leverages the model’s native ability to understand the “entire haystack” and identify what is missing.

Scenario B: Infinite Enterprise Knowledge

For broad-scale applications like corporate wikis, customer support knowledge bases, or massive codebase repositories, the Vector Database (RAG) remains the only viable warehouse. It serves as an essential gatekeeper, ensuring that the LLM is not overwhelmed by noise and that the computational costs of processing millions of documents remain sustainable.

In conclusion, the optimal strategy depends on the objective: if the goal is to find a specific “needle” in a vast, sprawling archive, RAG is the standard. If the goal is to understand the “whole book” and perform comprehensive reasoning over its contents, Long Context is the superior path. Most robust enterprise architectures will eventually converge on a hybrid approach—using RAG for initial filtration followed by Long Context for high-fidelity reasoning.