Technical Implementation Plan: Enterprise-Scale LLM Adaptation via Low-Rank Adaptation (LoRA)

📎 Attachments

- Download: /assets/lora_mastery.pdf

1. Strategic Overview of Parameter-Efficient Fine-Tuning (PEFT)

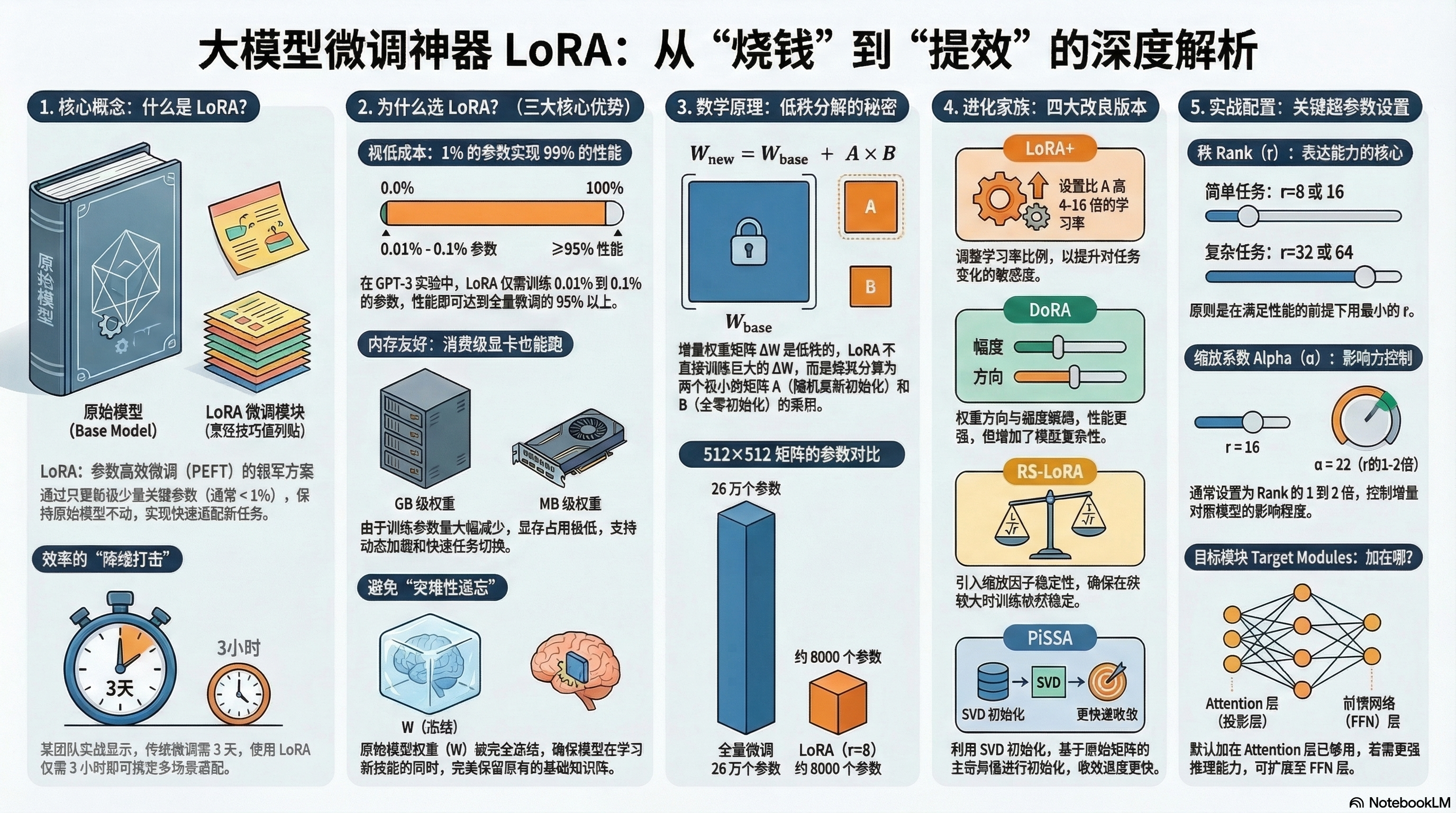

In the current landscape of enterprise AI, the deployment of Large Language Models (LLMs) has reached a critical inflection point. Traditional full-parameter fine-tuning, which modifies every weight in a multi-billion parameter model, is increasingly unsustainable. It presents a prohibitive “resource tax”—high VRAM costs, long training cycles, and the risk of “catastrophic forgetting.” To maintain competitive speed-to-market, organizations must pivot toward Parameter-Efficient Fine-Tuning (PEFT), specifically Low-Rank Adaptation (LoRA). This strategy resolves the tension between high-performance adaptation and computational constraints, allowing us to deliver specialized capabilities without the overhead of massive hardware clusters.

The strategic differentiator of LoRA is its shift from “rebuilding the library” to “precision patching.” Imagine the base LLM as a 10,000-page encyclopedia. Full fine-tuning is equivalent to rewriting the entire encyclopedia just to add a single chemistry recipe or a specific “seasoning” tip to the cooking section. It is inefficient and risks altering the foundational knowledge. LoRA, by contrast, leaves the original “encyclopedia” untouched and simply adds “sticky notes” to the margins. These notes contain only the delta—the specific task knowledge required. This “down-dimensional strike” on engineering inefficiency transforms project timelines, moving deployment from three days of full-model retraining to three hours of adapter training.

| Dimension | Full Fine-Tuning | LoRA (PEFT) |
|---|---|---|
| Computational Cost | Extremely High (VRAM intensive) | Very Low (Consumer GPUs viable) |
| Storage Requirements | Gigabytes per task (Full Model) | Megabytes per task (Adapter Only) |
| Risk of Catastrophic Forgetting | High (Original weights overwritten) | Low (Base weights are frozen) |
| Deployment Flexibility | Low (One model per task) | High (One base + multiple plugins) |

This efficiency is not a compromise but a mathematical realization: when models learn new tasks, the necessary updates do not require the full dimensionality of the original model.

---

2. Theoretical Architecture: The Low-Rank Mechanism

The mathematical intuition behind LoRA is rooted in the “Low-Rank Hypothesis.” While a model’s weight matrix ($W$) lives in a high-dimensional space, the information it carries is often concentrated in far fewer directions. Singular Value Decomposition (SVD) makes this visible: most singular values are small, so many rows or columns are close to linearly dependent on the others. In practice, task-specific updates ($\Delta W$) have a much lower intrinsic dimension than the original model. Capturing roughly the top 10–20 singular values (the “key directions”) is often enough to teach the model a new “accent” or specialized domain.
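The low-rank intuition can be written down with a truncated SVD. The identity below is standard linear algebra rather than anything specific to this plan; the symbols $\sigma_i$, $u_i$, and $v_i$ (singular values and singular vectors of $\Delta W$) are introduced here only for illustration.

```latex
\Delta W \;=\; \sum_{i=1}^{\operatorname{rank}(\Delta W)} \sigma_i \, u_i v_i^{\top}
\;\approx\; \sum_{i=1}^{r} \sigma_i \, u_i v_i^{\top},
\qquad \sigma_1 \ge \sigma_2 \ge \dots \ge 0 .
```

When the singular values decay quickly, the discarded tail is small, so a rank-$r$ product carries most of the update. LoRA does not compute this decomposition during training; it learns a rank-$r$ factorization directly.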

To operationalize this, LoRA decomposes the weight update $\Delta W$ into two smaller, low-rank matrices, $A$ and $B$. For a weight matrix $W$ of size $d \times k$, we represent the change as the product of $A$ (size $d \times r$) and $B$ (size $r \times k$), where the rank $r$ is much smaller than both $d$ and $k$.

The modified weight matrix $W'$ is expressed as:

$$W' = W + \Delta W = W + AB$$
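To make the size difference concrete, the sketch below counts trainable parameters for a single weight matrix under the shapes defined above. The function name and the example dimensions (d = k = 4096, r = 8) are illustrative assumptions, not values taken from a specific model.

```python
def lora_param_counts(d: int, k: int, r: int) -> tuple[int, int]:
    """Return (full, lora) trainable-parameter counts for one d x k weight matrix."""
    full = d * k        # every entry of W is trainable in full fine-tuning
    lora = r * (d + k)  # A is d x r, B is r x k
    return full, lora


# Illustrative sizes only (assumed, not taken from a specific model).
full, lora = lora_param_counts(d=4096, k=4096, r=8)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

For these assumed sizes the adapter holds 65,536 values against 16,777,216 in the full matrix, which is about 0.39% of the original.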

Initialization Protocol: Matrix A is initialized with Gaussian noise to allow for exploration, while Matrix B is initialized to zero. This ensures that at Step 0, AB = 0. By starting with a “blank slate” sticky note, we protect the model’s “mother tongue”—its foundational logic—ensuring no disruption to baseline performance before the first training steps begin. By freezing the backbone weights (W), we focus 100% of the optimization effort on these thin, efficient matrices.
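The initialization rule can be checked in a few lines. This is a minimal pure-Python sketch; the helper names, the tiny matrix sizes, and the standard deviation of 0.02 are assumptions chosen for illustration.

```python
import random


def matmul(X, Y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]


def init_lora(d: int, k: int, r: int, std: float = 0.02, seed: int = 0):
    """A (d x r) gets Gaussian noise, B (r x k) starts at zero."""
    rng = random.Random(seed)
    A = [[rng.gauss(0.0, std) for _ in range(r)] for _ in range(d)]
    B = [[0.0] * k for _ in range(r)]
    return A, B


A, B = init_lora(d=4, k=3, r=2)
delta_W = matmul(A, B)  # d x k update; every entry is zero at step 0
assert all(v == 0.0 for row in delta_W for v in row)
```

Because B is all zeros, the product AB vanishes regardless of the noise in A, so the adapted model starts out identical to the base model.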

---

3. Engineering Advantages: Resource Efficiency and Model Integrity

In a production environment, LoRA’s “frozen base” architecture is transformative. It allows a single foundational model to remain stable and immutable while serving as the anchor for a modular AI ecosystem.

Beyond the theoretical elegance, the engineering benchmarks are compelling:

- GPT-3 Evidence: With a rank r=8, LoRA requires updating only 0.01% to 0.1% of total parameters, yet it maintains 95%–99% of the performance seen in full fine-tuning.

- RoBERTa Evidence: At r=16, LoRA achieved scores within 0.5 to 1 point of full fine-tuning while requiring a fraction of the compute.

The Three Key Advantages of LoRA:

- Cost/Parameter Efficiency: Reducing tunable parameters from billions to millions allows for training on consumer-grade hardware, democratizing the ability to build custom models.

- Speed/Deployment: Adapters are measured in Megabytes (MB) rather than Gigabytes (GB). This allows for “hot-swapping” adapters in seconds, enabling a single inference server to switch between multiple specialized roles (e.g., Legal Analysis to Creative Writing) dynamically.

- Modular Design: Because the “Mother Tongue” (base weights) is never touched, the risk of catastrophic forgetting is low, and the original model can always be recovered by detaching the adapter. It retains general intelligence while successfully layering on a “Task Accent.”
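The hot-swapping idea from the list above can be sketched as a toy server that keeps one frozen base matrix and several named adapters. The class name `AdapterServer`, the adapter names, and the 2 x 2 matrices are hypothetical and exist only for this illustration; a real inference server would manage adapters per layer.

```python
def matvec(M, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]


class AdapterServer:
    """Toy server: one frozen base matrix W, many named (A, B) adapters."""

    def __init__(self, W):
        self.W = W          # shared base weights, never modified
        self.adapters = {}  # name -> (A, B)
        self.active = None

    def register(self, name, A, B):
        self.adapters[name] = (A, B)

    def activate(self, name):
        if name is not None and name not in self.adapters:
            raise KeyError(name)
        self.active = name  # switching only changes a reference; no weights are copied

    def forward(self, x):
        y = matvec(self.W, x)
        if self.active is not None:
            A, B = self.adapters[self.active]
            delta = matvec(A, matvec(B, x))  # A (B x), rank-r correction
            y = [yi + di for yi, di in zip(y, delta)]
        return y


server = AdapterServer(W=[[1.0, 0.0], [0.0, 1.0]])
server.register("legal", A=[[1.0], [0.0]], B=[[0.5, 0.0]])
server.register("creative", A=[[0.0], [1.0]], B=[[0.0, 2.0]])

for role in (None, "legal", "creative"):
    server.activate(role)
    print(role, server.forward([1.0, 1.0]))
```

Each role produces a different output from the same base weights, and switching back to `None` returns the unmodified base behavior.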

---

4. Implementation Workflow: The LoRA Training Cycle

The LoRA pipeline is a precision-strike optimization. Rather than the brute force of updating the entire weight manifold, the workflow is as follows:

- Parameter Initialization: Freeze W. Initialize A (Gaussian) and B (Zero).

- Forward Propagation: For input X, the output is calculated as Y = (W + AB)X, which is evaluated in practice as WX + A(BX) so the full-size product AB is never formed. The base model and the adapter contribute to the final result simultaneously.

- Gradient Calculation: This is the primary source of memory savings. Gradients, and the optimizer states that accompany them, are computed and stored only for matrices A and B. Because nothing of the kind is kept for the massive W matrix, we can train large models on GPUs that would otherwise run out of memory during full fine-tuning.

- Optimizer-driven Updates: Parameters of A and B are updated (via Adam/AdamW) to minimize the loss.

This “lazy” update strategy ensures that we are only refining the necessary task-specific vectors, keeping the computational footprint minimal.
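The four steps above can be sketched end to end on a toy problem. This is a minimal illustration under several assumptions: plain SGD stands in for Adam/AdamW to keep the code short, the loss is a squared error on a single example, and all sizes, values, and function names are made up for the demonstration.

```python
import random


def matvec(M, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]


def lora_forward(W, A, B, x):
    """y = W x + A (B x); the d x k product A B is never materialized."""
    u = matvec(B, x)
    y = [wi + ai for wi, ai in zip(matvec(W, x), matvec(A, u))]
    return y, u


def train_step(W, A, B, x, target, lr):
    """One SGD step on 0.5 * ||y - target||^2. Only A and B change; W is frozen."""
    y, u = lora_forward(W, A, B, x)
    err = [yi - ti for yi, ti in zip(y, target)]
    loss = 0.5 * sum(e * e for e in err)
    # Gradients exist only for the adapter: dL/dA = err u^T, dL/dB = (A^T err) x^T.
    At_err = [sum(A[i][j] * err[i] for i in range(len(A))) for j in range(len(B))]
    for i, row in enumerate(A):
        for j in range(len(row)):
            row[j] -= lr * err[i] * u[j]
    for j, row in enumerate(B):
        for c in range(len(row)):
            row[c] -= lr * At_err[j] * x[c]
    return loss


rng = random.Random(0)
d, k, r = 4, 3, 2
W = [[rng.gauss(0.0, 1.0) for _ in range(k)] for _ in range(d)]  # frozen base
W_before = [row[:] for row in W]
A = [[rng.gauss(0.0, 0.5) for _ in range(r)] for _ in range(d)]  # Gaussian init
B = [[0.0] * k for _ in range(r)]                                # zero init

x = [1.0, -0.5, 0.25]
# Toy target: the base model's output shifted by a small task-specific offset.
target = [yi + off for yi, off in zip(matvec(W, x), [0.5, -0.5, 0.25, 0.0])]

losses = [train_step(W, A, B, x, target, lr=0.05) for _ in range(200)]
print(f"loss: {losses[0]:.4f} -> {losses[-1]:.6f}")
assert W == W_before  # the base weights were never touched
```

The update loops touch only A and B, which is where the memory savings come from: no gradient buffer or optimizer state is ever allocated for W.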

---

5. Comparative Analysis of LoRA Variants

As the ecosystem has matured, several specialized variants have emerged to solve stability and convergence issues.

- LoRA+: Uses different learning rates for the two adapter matrices, applying a higher rate (typically 4x–16x) to Matrix B than to Matrix A, which speeds up convergence.

- DoRA (Weight-Decomposed Low-Rank Adaptation): Decouples weights into Magnitude (M) and Direction (V). By tuning direction via LoRA and training magnitude separately, it bridges the performance gap with full fine-tuning, though at the cost of higher complexity.

- RS-LoRA (Rank-Stabilized LoRA): To keep training stable at high ranks, this variant scales the adapter by $\frac{\alpha}{\sqrt{r}}$ instead of the standard $\frac{\alpha}{r}$. The standard factor shrinks the update as $r$ grows, which slows or stalls learning at high rank; the square-root factor keeps gradient magnitudes stable.

- PiSSA: Uses SVD to initialize A and B from the principal singular values and singular vectors of the original weights. This significantly accelerates convergence but carries a “Principal’s Tax”: a higher up-front computational cost to perform the SVD.
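Two of the variants above reduce to simple arithmetic, sketched here. The function names are hypothetical, the scaling factor is assumed to multiply the AB product, and the default ratio of 16 is just the upper end of the range quoted above.

```python
import math


def lora_scale(alpha: float, r: int) -> float:
    """Standard LoRA scaling factor: alpha / r."""
    return alpha / r


def rslora_scale(alpha: float, r: int) -> float:
    """Rank-stabilized scaling factor: alpha / sqrt(r)."""
    return alpha / math.sqrt(r)


def lora_plus_learning_rates(base_lr: float, ratio: float = 16.0) -> dict:
    """LoRA+ style learning rates: B is trained `ratio` times faster than A."""
    return {"A": base_lr, "B": base_lr * ratio}


# With alpha fixed, compare how the two factors shrink as the rank grows.
for r in (8, 64, 256):
    print(f"r={r:<4} alpha/r={lora_scale(16, r):<8} alpha/sqrt(r)={rslora_scale(16, r):.3f}")

print(lora_plus_learning_rates(base_lr=1e-4))
```

With alpha held constant, the standard factor falls linearly with the rank while the rank-stabilized factor falls only with its square root, which is why the two diverge most at high ranks.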

Decision Matrix:

- Priority: Cost Efficiency & Simplicity → Standard LoRA or LoRA+.

- Priority: Maximum Performance & Reasoning → DoRA or RS-LoRA.

---

6. Practical Configuration: Hyperparameter Optimization

The key to a successful LoRA implementation is “Goldilocks” tuning—providing enough capacity for the “accent” without overfitting.

Engineering Best Practices:

- Rank (R):

  - Simple Tasks (Classification/Extraction): R = 8 or 16.

  - Medium Tasks (Standard Chat/Summarization): R = 32.

  - Complex Tasks (Reasoning/Code/Math): R = 64.

- Alpha ($\alpha$): This acts as the scaling factor for the adapter’s influence. The standard rule of thumb is to set $\alpha$ at 1x to 2x the value of R (e.g., R=32, $\alpha$=64).

- Optimization Protocol (Target Modules):

  - Baseline: Apply to Attention projection layers (default).

  - Escalation: If accuracy is insufficient, expand to Feed-Forward Networks (FFN). This captures more complex patterns but increases the parameter count.
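The rules of thumb above can be captured in a small helper. This is a hedged sketch: `LoraSettings` is a standalone stand-in class whose field names mirror those of Hugging Face PEFT's `LoraConfig` (`r`, `lora_alpha`, `target_modules`), and the module names are placeholders, since real layer names differ from model to model.

```python
from dataclasses import dataclass, field


@dataclass
class LoraSettings:
    r: int
    lora_alpha: int
    target_modules: list = field(default_factory=list)


# Rank tiers from the list above; "simple" uses the lower of the two suggested values.
RANK_BY_TASK = {"simple": 8, "medium": 32, "complex": 64}

# Placeholder module names (assumed); look up the real ones for the model in use.
ATTENTION_MODULES = ["attn_q", "attn_k", "attn_v", "attn_out"]
FFN_MODULES = ["ffn_up", "ffn_down"]


def suggest_settings(task: str, alpha_multiplier: int = 2,
                     include_ffn: bool = False) -> LoraSettings:
    """Map a task tier to rank, alpha (1x or 2x rank), and target modules."""
    if task not in RANK_BY_TASK:
        raise ValueError(f"unknown task tier: {task!r}")
    if alpha_multiplier not in (1, 2):
        raise ValueError("alpha_multiplier should be 1 or 2")
    r = RANK_BY_TASK[task]
    modules = ATTENTION_MODULES + (FFN_MODULES if include_ffn else [])
    return LoraSettings(r=r, lora_alpha=alpha_multiplier * r, target_modules=modules)


print(suggest_settings("medium"))
print(suggest_settings("complex", include_ffn=True))
```

Starting with attention-only targets and setting `include_ffn=True` only when accuracy falls short follows the baseline-then-escalation order described above.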

---

7. Conclusion: The Future of Modular LLM Deployment

The transition to LoRA marks a fundamental paradigm shift from monolithic, rigid AI to a modular, adapter-based ecosystem. By mastering this “down-dimensional strike” on engineering inefficiency, enterprises can transform their operational velocity—compressing a three-day training bottleneck into a three-hour iteration cycle.

The success of our modular deployment rests on the Three Pillars of LoRA:

- Balance: Right-sizing the rank to capture task complexity.

- Efficiency: Reducing the storage footprint from GBs to MBs to enable scaling.

- Retention: Protecting the “Mother Tongue” of the base model while precisely layering on the “Task Accent.”

This architectural approach allows an enterprise to maintain dozens of specialized AI agents while paying the VRAM cost of a single base model plus a few megabytes per adapter, ensuring that our AI strategy is both high-performing and economically sustainable.