Technical Architecture Proposal: Building Evolving AI Agents via Document-Driven Memory Systems

📎 Attachments

- Download: /assets/the_openclaw_paradox.pdf

1. Executive Summary: The Shift from Chatbots to Persistent Agents

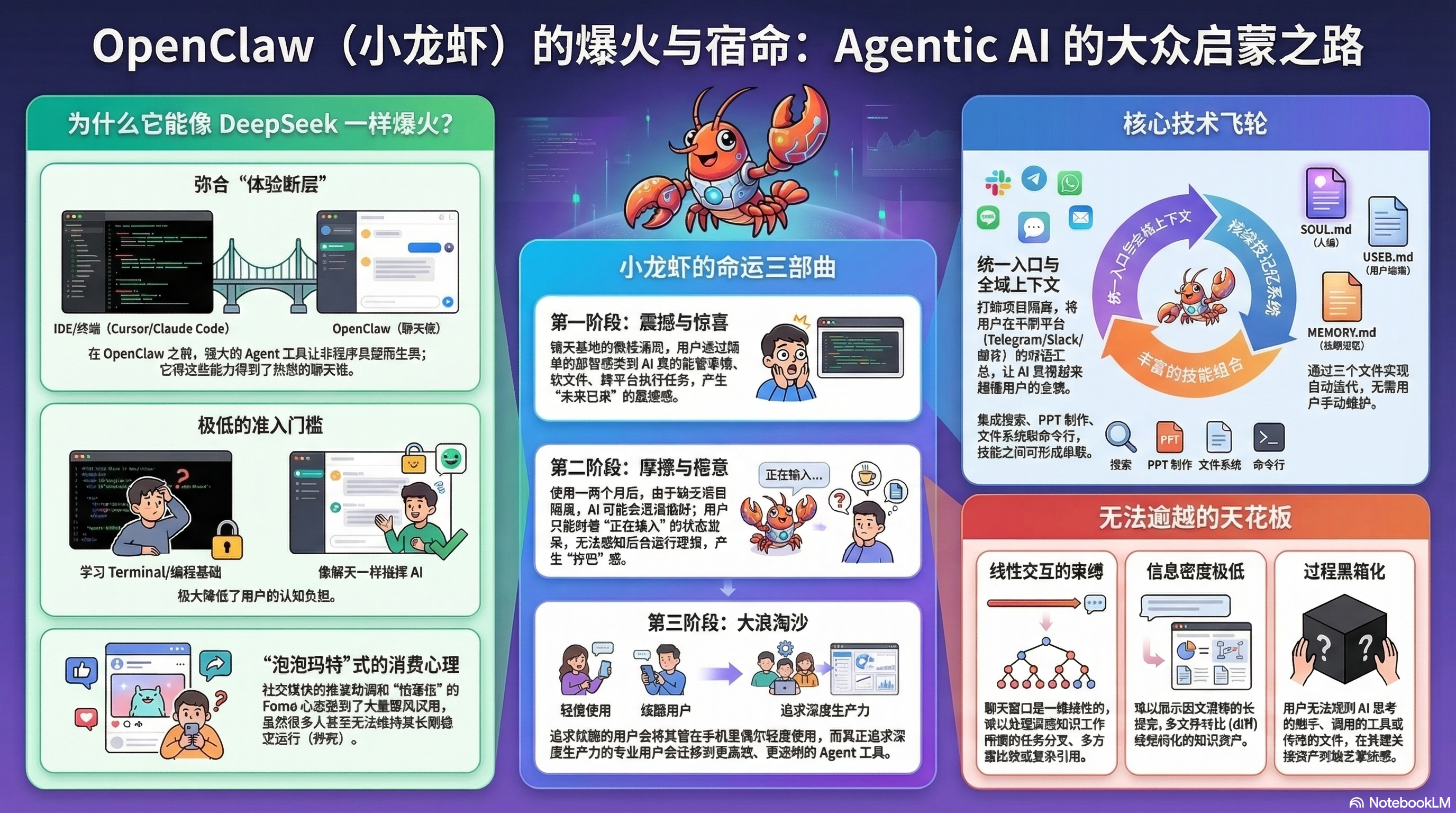

The current AI landscape is undergoing a decisive strategic shift from “Open-Loop” chat interfaces to “Closed-Loop” Agentic AI. While standard LLM interfaces like ChatGPT offer high-quality dialogue, they remain fundamentally reactive, requiring constant user intervention to maintain momentum. The transition to professional productivity tools necessitates persistent state management—a bridge that allows AI to move beyond ephemeral conversations and toward autonomous task execution.

However, as early implementations like OpenClaw have demonstrated, a “Closed-Loop” system is only as effective as its memory architecture. Standard systems often suffer from “token saturation,” where the context window becomes cluttered with raw interaction history, diluting the model’s reasoning capabilities. This proposal outlines a “Post-OpenClaw” implementation strategy: a document-driven memory system that avoids the “ceiling” of linear chat interfaces and ensures long-term system intelligence. By prioritizing structured knowledge over raw token accumulation, we create agents that don’t just remember; they evolve.

2. The Triad Architecture: SOUL, USER, and MEMORY

A robust Agentic architecture requires the strategic segregation of identity, user preferences, and factual data. This modularity is not merely a technical preference; it enables “Persona Portability.” By decoupling the agent’s “Silicon Soul” from the underlying platform, we can “clone” a highly specialized agent simply by migrating its core Markdown files to a new environment. This approach ensures that whether the user interacts via Slack, a Terminal, or an IDE, the agent maintains a unified context.

The Core Triad Breakdown

| Component | File Name | Role & Strategic Importance |
|---|---|---|
| Persona Definition | SOUL.md | Defines the agent’s core identity, cognitive biases, and communication style. It ensures a consistent “work partner” experience across sessions. |
| User Profiling | USER.md | Captures the evolving image of the user, including specific project roles, preferred technical stacks, and recurring communication nuances. |
| Long-term Knowledge | MEMORY.md | A curated repository of high-value facts, technical requirements, and project-specific context extracted from past interactions. |
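As a minimal sketch of how the triad could be read and assembled into a single context preamble, assuming the three files sit side by side in one directory: the function names `load_triad` and `build_context`, the directory layout, and the `## <file name>` section headers are all illustrative assumptions, not part of any existing tool.

```python
from pathlib import Path

# Assumption: the three triad files live side by side in one agent directory.
TRIAD_FILES = ("SOUL.md", "USER.md", "MEMORY.md")


def load_triad(agent_dir: Path) -> dict[str, str]:
    """Read each triad file; a missing file is treated as empty."""
    triad = {}
    for name in TRIAD_FILES:
        path = agent_dir / name
        triad[name] = path.read_text(encoding="utf-8") if path.exists() else ""
    return triad


def build_context(triad: dict[str, str]) -> str:
    """Join the non-empty triad files into one preamble, always in the same order."""
    sections = []
    for name in TRIAD_FILES:
        body = triad.get(name, "").strip()
        if body:
            sections.append(f"## {name}\n{body}")
    return "\n\n".join(sections)
```

Under this layout, “cloning” an agent amounts to copying the directory and calling `load_triad` on the copy, which is one way to read the portability claim above.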

Translating “Soul” Traits to Agent Output Style

The SOUL.md file serves as the behavioral blueprint. By modifying these traits, we can drastically pivot the agent’s utility for different business units.

| Soul Trait | Impact on Agent Output Style |
|---|---|
| Directness | Forces the agent to bypass conversational pleasantries in favor of immediate code/data output. |
| Technical Depth | Mandates the inclusion of architectural trade-offs and edge-case analysis in all technical responses. |
| Inquisitiveness | Instructs the agent to proactively question ambiguous requirements before proceeding with task execution. |
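One way the traits in the table might be turned into prompt directives is sketched below. The `- trait: on/off` line format for SOUL.md and the trait keys are assumptions made for illustration; the directive wording paraphrases the table.

```python
# Directive wording paraphrases the trait table; the trait keys and the
# "- trait: on/off" line format are assumptions made for this sketch.
TRAIT_DIRECTIVES = {
    "directness": "Skip conversational pleasantries; lead with the code or data.",
    "technical_depth": "Include architectural trade-offs and edge cases in technical answers.",
    "inquisitiveness": "Ask about ambiguous requirements before executing a task.",
}


def parse_traits(soul_text: str) -> dict[str, bool]:
    """Parse lines such as '- directness: on' into {'directness': True}."""
    traits = {}
    for line in soul_text.splitlines():
        line = line.strip()
        if not line.startswith("- ") or ":" not in line:
            continue  # ignore headings, prose, and blank lines
        key, _, value = line[2:].partition(":")
        traits[key.strip().lower()] = value.strip().lower() == "on"
    return traits


def style_directives(soul_text: str) -> list[str]:
    """Return the directive for every enabled trait, in the table's order."""
    traits = parse_traits(soul_text)
    return [text for key, text in TRAIT_DIRECTIVES.items() if traits.get(key)]
```

Flipping a single line in SOUL.md from `on` to `off` changes the directive list without touching any code, which is the kind of pivot between business units the section describes.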

This triad interaction ensures that the agent becomes increasingly “opinionated” and efficient, drawing from the same persistent files to understand that a project update requested on Slack must align with the technical documentation currently open in the user’s IDE.

3. The Self-Evolution Loop: Extraction, Review, and Pruning

To remain effective in high-velocity business environments, an agent must act as its own librarian. Without “Autonomous Maintenance,” agents inevitably face system decay. As an architect, I view this loop not as an optional feature, but as a mandatory guardrail against the entropy of unstructured data.

The Self-Maintenance Pipeline

The agent must be programmed to execute the following internal commands at regular intervals:

- Log Review: Analyze raw interaction logs from all platforms (Slack, Email, Terminal) to identify recurring themes and high-value data points.

- Information Distillation: Synthesize raw logs into structured insights, updating the .md triad files. For example, if a user repeatedly requests Python for data tasks, the USER.md profile is updated to reflect this preference.

- Conflict Resolution & Pruning: Identify and delete outdated project specs or resolve contradictions between legacy data and recent updates.
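The three steps above can be sketched in memory as follows. This is a simplified illustration, not a production pipeline: keyword counting stands in for model-driven log review, and the `Fact` record, the mention threshold, and the “latest entry wins” rule are assumptions chosen for the example.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Fact:
    key: str        # what the fact is about, e.g. "api.version" (hypothetical)
    value: str
    recorded: date  # when the fact was extracted


def review_logs(log_lines: list[str], keywords: list[str]) -> Counter:
    """Log review: count how many log lines mention each keyword.

    Matching is a naive case-insensitive substring test.
    """
    counts = Counter()
    for line in log_lines:
        lowered = line.lower()
        for word in keywords:
            if word.lower() in lowered:
                counts[word] += 1
    return counts


def distill_preferences(counts: Counter, min_mentions: int = 3) -> list[str]:
    """Distillation: keep keywords mentioned often enough to count as a preference."""
    return sorted(word for word, n in counts.items() if n >= min_mentions)


def prune(facts: list[Fact]) -> list[Fact]:
    """Conflict resolution: when several facts share a key, keep the most recent."""
    latest: dict[str, Fact] = {}
    for fact in facts:
        current = latest.get(fact.key)
        if current is None or fact.recorded > current.recorded:
            latest[fact.key] = fact
    return sorted(latest.values(), key=lambda f: f.key)
```

In a real deployment the distilled preferences would be written back to USER.md and the pruned facts to MEMORY.md; that write step is omitted here to keep the sketch free of side effects.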

The “So What?” Analysis: Why is this loop critical? Because it improves the signal-to-noise ratio of what the model sees. By moving data from cluttered interaction logs into lean, structured Markdown files, we prevent the context window from becoming a graveyard of irrelevant details. This distillation is an important defense against “hallucination”: it keeps the model’s limited attention focused on current, verified facts rather than on stale or contradictory history.
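To make the context-window point concrete, here is a small sketch of budget-aware selection. The four-characters-per-token estimate is a rough assumption, not a real tokenizer, and the `(priority, text)` entry shape is hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Very rough estimate: about 4 characters per token (an assumption, not a tokenizer)."""
    return max(1, len(text) // 4) if text else 0


def select_within_budget(entries: list[tuple[int, str]], budget: int) -> list[str]:
    """Pick entries by descending priority until the token budget is used up.

    Each entry is (priority, text); a higher priority is considered first.
    Entries that do not fit are skipped, so a smaller, lower-priority entry
    can still be included after a larger one was rejected.
    """
    chosen = []
    remaining = budget
    for _, text in sorted(entries, key=lambda e: e[0], reverse=True):
        cost = estimate_tokens(text)
        if cost <= remaining:
            chosen.append(text)
            remaining -= cost
    return chosen
```

Anything that fails to fit stays in the Markdown files rather than in the prompt, so the stored knowledge can grow while the active context stays roughly constant in size.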

4. The Flywheel Effect: Interoperability and Capability Compounding

When unified entry points (like Slack or Feishu) are combined with persistent memory, the system triggers a “Flywheel Effect”—a state where every interaction compounds the agent’s utility.

The Synergy of Memory and Extensible Skills

The true power of this architecture emerges when Persistent Memory interacts with Extensible Skills—such as File System diffs, Terminal execution, and API-driven search.

- Data Compounding: The agent acts as a centralized intelligence hub. It can ingest a project update from a mobile chat app, store it in MEMORY.md, and then apply that specific context when the user asks it to generate a technical PPT or execute a code diff in the terminal hours later. The agent stops being a siloed tool and becomes a repository of institutional knowledge.

- Skill Evolution: Beyond merely using tools, a sophisticated agent utilizes its memory to create tools. By leveraging its ability to generate and execute code, the agent can write its own specialized “skills” for a specific business task (such as a custom data-scraping script) and then “remember” how to invoke that tool in the future.
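The “write a skill, then remember how to invoke it” idea could look roughly like the sketch below. The `SkillRegistry` class, the `skills.json` index file, and the recorded command format are all hypothetical; the sketch only stores and recalls scripts and deliberately does not execute them.

```python
import json
from pathlib import Path
from typing import Optional


class SkillRegistry:
    """Persist agent-written scripts plus a readable index of how to invoke them."""

    def __init__(self, root: Path):
        self.root = root
        self.index_path = root / "skills.json"  # hypothetical index file
        self.root.mkdir(parents=True, exist_ok=True)

    def _load(self) -> dict:
        """Read the index from disk, or start with an empty one."""
        if self.index_path.exists():
            return json.loads(self.index_path.read_text(encoding="utf-8"))
        return {}

    def register(self, name: str, source: str, description: str) -> Path:
        """Save the script and record its description and invocation command."""
        script = self.root / f"{name}.py"
        script.write_text(source, encoding="utf-8")
        index = self._load()
        index[name] = {"description": description, "command": f"python {script.name}"}
        self.index_path.write_text(
            json.dumps(index, indent=2, sort_keys=True), encoding="utf-8"
        )
        return script

    def recall(self, name: str) -> Optional[dict]:
        """Look up how a previously written skill is invoked, if it exists."""
        return self._load().get(name)
```

Because the index lives on disk, a skill registered in one session can be recalled in a later one. Running agent-generated scripts would additionally call for review and sandboxing, which this sketch leaves out.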

This compounding cycle ensures the agent becomes “smarter” (more precise) rather than “heavier” (slower), evolving its capabilities alongside the user’s career or project lifecycle.

5. Implementation Strategy and Guardrails

While chat-based entry points (like Telegram or Slack) lower the barrier to entry, they introduce a “Ceiling.” Chat is linear, whereas deep knowledge work—comparing file diffs or managing complex folder structures—is non-linear. A professional implementation must allow the agent to move between the low-friction chat window and high-fidelity environments like the IDE or Terminal.

Technical Guardrails to Prevent “Agentic Decay”

Without strict maintenance, agents often “die” or become “clunky” within 1–2 months. To prevent this, the following guardrails are required:

- Prevention of Context Bleeding: The system must implement project-specific pruning. Without it, the agent suffers from “Context Bleeding”—mistakenly applying Project A’s formatting preferences or architectural constraints to Project B. This is the leading cause of user frustration in long-term deployments.

- Human-in-the-Loop Observability: All memory updates must occur within human-readable Markdown files. This ensures that the agent’s “Silicon Soul” remains auditable. A user must be able to manually edit MEMORY.md to correct a misunderstood fact, preventing the agent from spiraling into incorrect self-assumptions.

- Token Efficiency Modeling: We must continuously evaluate the cost-benefit of document updates vs. context window saturation. The architecture should prioritize offloading information to persistent storage to keep the “active reasoning” context lean and high-performance.
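The Context Bleeding guardrail above can be illustrated with project-scoped reads of MEMORY.md. The sketch assumes a convention in which each project has its own `## <project>` heading and any lines before the first heading are shared; that convention and both function names are assumptions for this example.

```python
def split_by_project(memory_text: str) -> dict[str, list[str]]:
    """Group MEMORY.md lines under '## <project>' headings (assumed convention)."""
    sections: dict[str, list[str]] = {}
    current = "global"  # lines before the first heading are treated as shared
    for line in memory_text.splitlines():
        if line.startswith("## "):
            current = line[3:].strip().lower()
            sections.setdefault(current, [])
        elif line.strip():
            sections.setdefault(current, []).append(line.strip())
    return sections


def scoped_memory(memory_text: str, project: str) -> list[str]:
    """Return shared facts plus facts for one project; other projects are excluded."""
    sections = split_by_project(memory_text)
    return sections.get("global", []) + sections.get(project.lower(), [])
```

Because the file stays plain Markdown, a user can still open it and move or correct a fact by hand, which keeps this guardrail compatible with the observability requirement.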

Documentation is no longer just for humans; it is the “Silicon Soul” of the AI. By building an architecture centered on structured, evolving memory, we move past the novelty of chat and into the era of the truly persistent AI partner.