Technical Architecture Design Unified Multimodal Vectorization via Gemini Embedding 2

📎 附件资料

- 在线预览(幻灯片模式):点击打开

- 下载:/assets/the_unified_multimodal_universe.pdf

🖥️ 幻灯片预览(支持全屏)

1. The Paradigm Shift in Multimodal Retrieval

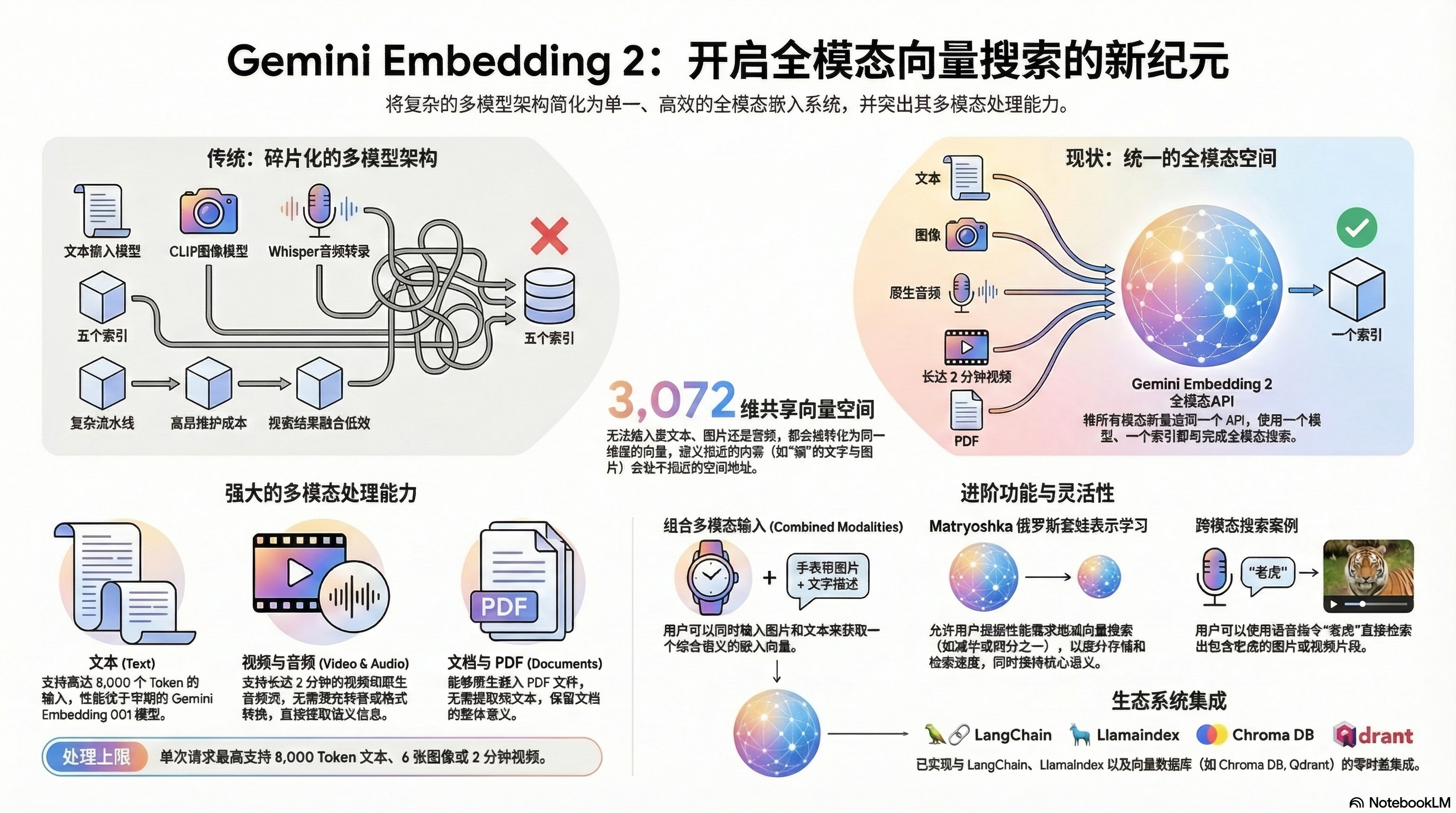

The current enterprise data landscape is characterized by a move away from fragmented, modality-specific pipelines toward unified vector spaces. For the Principal Architect, this shift is not merely a convenience but a strategic necessity for system agility. Historically, building a search system that spanned text, audio, and video required a fragile orchestration of heterogeneous models. The introduction of Gemini Embedding 2 marks a transition to a native multimodal architecture where disparate data types are mapped into a single mathematical environment. This unified vector space allows for seamless cross-modal discovery, significantly reducing the engineering overhead required to make enterprise data—from legal PDFs to customer voice recordings—accessible and actionable.

Leveraging the Google Gen AI SDK, Gemini Embedding 2 functions as a natively multimodal model, replacing the need for disparate specialized pipelines. By collapsing the processing of text, images, video, and audio into a single shared vector space, it offers a streamlined “One Model, One Index” value proposition. This architecture is supported on day-zero by key ecosystem players including LangChain, LlamaIndex, ChromaDB, and Qdrant, ensuring that this shift is immediately implementable within existing enterprise RAG (Retrieval-Augmented Generation) frameworks.

2. Critical Audit of Legacy “Multi-Model” Cascaded Architectures

Traditional “cascaded” architectures have become a primary bottleneck for enterprise scalability. In these legacy systems, developers were forced to stitch together a patchwork of models—such as CLIP for images, Whisper for audio transcription, and BERT-based models for text—each producing vectors in non-aligned spaces. This approach introduces several systemic headaches:

- Infrastructural Bloat: Systems required maintaining five or more distinct models and indexes. This creates significant technical debt and complicates versioning and lifecycle management.

- The Re-ranking/Fusion Challenge: Since different models reside in different vector spaces, results are not directly comparable. This necessitates a complex re-ranking or “fusion” layer to reconcile hits from different modalities, which is notoriously “messy” and increases retrieval latency.

- Preprocessing Latency: Heavy preprocessing is the status quo. Audio must be transcribed before embedding, and PDFs are often stripped of their visual context to be processed as plain text, destroying valuable semantic metadata.

| Metric | Legacy Cascaded Pipelines | Unified Gemini Embedding 2 Pipeline |

|---|---|---|

| Model Management | 5+ Models (CLIP, SigLIP, Whisper, etc.) | Single Native Multimodal Model |

| Preprocessing | Transcription & Text Extraction Required | Native Processing (No Conversion) |

| Hardware/Compute | Heterogeneous (CPU for Whisper, GPU for CLIP) | Unified API-Driven (Serverless) |

| Index Management | Multiple Disparate Indexes | Single Unified Index |

| Retrieval Logic | Complex Reranking & Fusion Layers | Direct Vector Similarity Search |

| Maintenance | High (High Op-Ex, complex orchestration) | Low (Simplified SDK & Infrastructure) |

By collapsing these disparate workflows, architects can eliminate the friction of cross-model synchronization and move toward a more deterministic retrieval path.

3. Core Technical Capabilities of Gemini Embedding 2

Gemini Embedding 2 is a native multimodal processor, meaning it extracts semantic features directly from the raw data without intermediate translation. This “No Conversion” advantage is critical for maintaining the integrity of the semantic signal.

- Video: Supports clips up to 2 minutes natively. It can identify specific visual cues, such as a “soccer team in yellow uniforms,” without frame-by-frame text tagging.

- Audio: Native embedding without transcription. It can capture the semantic essence of speech—for example, a recording of the word “tiger” can directly retrieve video or image assets of tigers.

- Documents (PDFs): Processes PDFs natively, maintaining the spatial relationship between text and diagrams that is often lost in OCR-based text extraction.

- Text & Images: Robust support for up to 8,000 tokens and 6 images simultaneously in a single content object.

The model utilizes a 3,072-dimensional vector space. These high-dimensional properties allow for granular semantic “addressing.” In a million-item index, the system can distinguish between subtle visual and auditory differences, such as the specific markings on a black-and-white cat’s face, ensuring that similarity lookups are highly precise across modalities.

4. Architectural Optimization: Eliminating Systemic Complexity

Moving to a “One Model, One Index, One Query” workflow fundamentally redefines the technical stack by replacing heterogeneous infrastructure with a unified API-driven architecture.

The strategic impact of the single API call is the elimination of the cross-modal fusion layer. Historically, the ROI of multimodal systems was hampered by the need for expensive CPU-heavy transcription (Whisper) alongside GPU-heavy image embedding (CLIP). Gemini Embedding 2 collapses this into a serverless API call, removing the need for specialized embedding servers and reducing maintenance hours. Because the model performs semantic alignment internally, a text query for “peaceful nature sounds” can return the highest-scoring audio file directly based on vector similarity, without any intermediate transcription or re-ranking logic. This reduces system latency and significantly lowers the barrier to entry for high-performance multimodal search.

5. Advanced Implementation Strategies: Matryoshka & Aggregated Embeddings

Enterprise-grade systems require a balance between retrieval precision and storage costs. Gemini Embedding 2 provides two key mechanisms for this optimization:

Matryoshka Representation Learning This feature allows for “nested” embeddings where a single 3,072-dimensional vector can be truncated to 1/2 (1,536) or 1/4 (768) of its size.

- Trade-off Evaluation: Architects should deploy 768-dimensional embeddings for high-speed, preliminary lookups or when storage costs are a primary constraint. The full 3,072-dimensional vector should be reserved for use cases requiring fine-grained semantic granularity (e.g., specific object colors).

- Systems Advantage: Because the dimensions are nested, developers can adjust the embedding size without re-indexing the entire dataset, a massive operational advantage during system tuning.

Multi-Part Content Aggregation vs. Separate Embeddings A critical distinction exists in how the SDK handles content:

- Aggregated Embeddings: By passing multiple “parts” (e.g., an image of a watch band + a text description of a watch face) within a single

contentobject, the model returns one “averaged” vector representing the combined semantic intent. This is ideal for complex queries where the user provides multi-modal input for a single search. - Separate Embeddings: Passing a list of distinct

contentobjects returns multiple vectors. This is the standard approach for indexing a library where each asset requires its own address in the vector space.

6. System Constraints and Design Considerations

As of the current release, the Gemini embedding 2 preview model requires specific architectural mitigations to ensure production stability:

- Temporal Chunking for Video: With a 2-minute native limit, longer content must be segmented. For a 10-hour lecture series, architects should implement 15-to-30-second temporal chunks. This allows the system to return precise timestamps for queries like “When did the lecturer show the circuit diagram?”

- Token and Image Management: While the limit is 8,000 tokens, semantic chunking is still recommended to avoid signal dilution. Similarly, the 6-image limit per request dictates how multi-page document embeddings should be batched.

- Production Warning: Being a

previewmodel, architects must account for potential rate limit adjustments and breaking changes. Design systems with an abstraction layer over the SDK to facilitate rapid updates as the model reaches general availability.

These constraints necessitate a modular ingestion pipeline that segments data into optimal sizes while maintaining their association within the unified index.

7. Strategic Conclusion: The Future of Multimodal Intelligence

The shift from fragmented search to a unified semantic understanding represents a milestone in AI systems architecture. Gemini Embedding 2 is not just a multimodal model; it is a quality-of-embedding upgrade, outperforming the original Gemini 001 model on text-to-text similarity and setting new benchmarks for cross-modality retrieval.

This architecture enables the transition from “unstructured file storage” to “integrated knowledge repositories.” A university, for instance, can now build a single RAG system where a student’s query retrieves a specific 15-second clip from a 30-hour video series, the corresponding slide in a PDF deck, and the relevant paragraph in a textbook—all through a single vector lookup. By improving embedding quality and collapsing systemic complexity, this unified approach unlocks product categories that were previously too technically expensive to realize.