White Paper Optimizing LLM Agent Efficiency with Anthropic’s Tool Calling 2.0 Framework

📎 附件资料

- 在线预览(幻灯片模式):点击打开

- 下载:/assets/tool_calling_20_redefined.pdf

🖥️ 幻灯片预览(支持全屏)

1. The Paradigm Shift: From JSON-Based Interaction to Programmatic Tool Calling

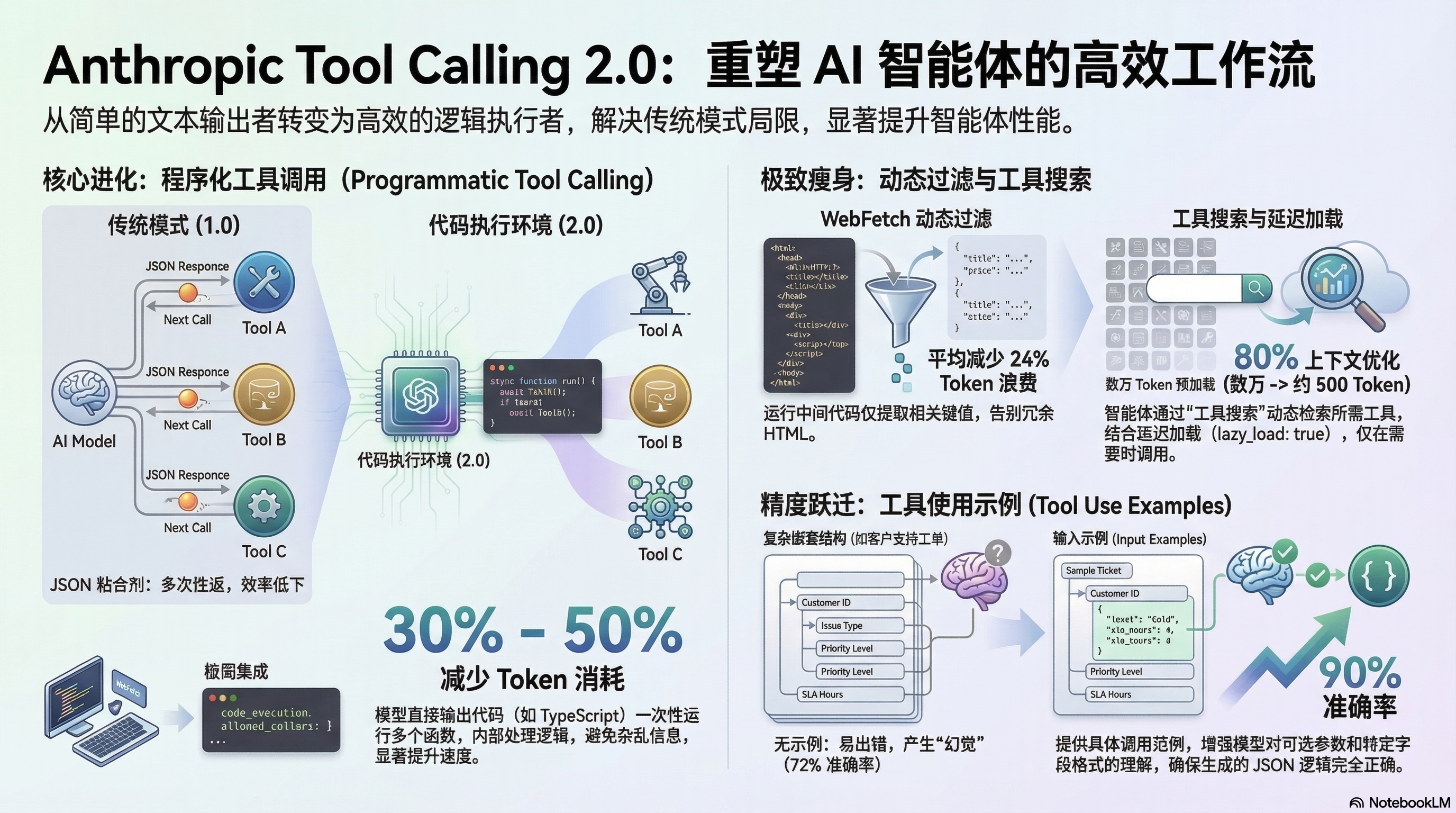

As Chief AI Solutions Architects, we are witnessing a fundamental transition in agentic design: the move from “Tool Calling 1.0”—characterized by static JSON outputs—to “Tool Calling 2.0,” a programmatic execution framework. For technical decision-makers, this evolution is the difference between a brittle prototype and a production-grade autonomous system. The traditional reliance on the model to act as a “glue” layer, manually translating intent into JSON schemas, is being superseded by environments where the model generates executable code to orchestrate its own toolchain.

The limitations of traditional tool calling are rooted in High-Latency Latency Cycles (Ping-Pong Interaction). In this legacy model, an agent must output a parameter, wait for a server response, ingest that response into its context, and repeat. This cycle is not only slow but computationally wasteful, often forcing the LLM to manually re-generate identical identifiers—such as database keys or email IDs—across multiple turns. Programmatic Tool Calling addresses these inefficiencies by treating the LLM as a developer rather than a data entry clerk.

“Asking an LLM to perform tasks solely through JSON-based tool calling is like asking William Shakespeare to write a play in Chinese after only a month of language classes. It might be possible, but it is far from his best or most natural work. LLMs are fundamentally more effective at writing code than they are at generating and reasoning through complex JSON schemas.”

By transitioning to an architecture where the model writes code (e.g., TypeScript or Python) to handle loops, conditional logic, and data passing between tools, we achieve a more deterministic execution flow. This architectural efficiency begins with minimizing the data footprint required for complex reasoning.

2. Mitigating Context Window Waste via Structural Optimization

In the current landscape, there is a significant delta between the “Theoretical Context Window” (often marketed at 1M+ tokens) and the “Effective Context Window,” which realistically sits between 128k and 200k tokens for complex reasoning tasks. To maintain performance, optimizing input content is a strategic necessity for cost management and attention preservation.

The Impact of Programmatic Tool Calling on Token Consumption

Programmatic execution allows for the localization of “messy” intermediate data. In a traditional workflow, the raw metadata of twenty emails might be pumped into the context window just to extract three IDs. With Tool Calling 2.0, this data remains inside the function environment. The code handles the iteration and filtering, returning only the final, relevant signal to the LLM. This architectural shift significantly improves the noise-to-signal ratio in the model’s attention mechanism, leading to a documented 30% to 50% reduction in token usage.

Dynamic Filtering (WebFetch) as a Specialized Efficiency Layer

A prime example of this optimization is Dynamic Filtering within tools like WebFetch (specifically version webfetch_20260209). Instead of dumping raw, noisy HTML into the context window, this layer executes an intermediate code step to filter for pertinent content before the data reaches the LLM.

Key Metric: Dynamic Filtering via WebFetch achieves a 24% reduction in token consumption by stripping non-essential HTML metadata before context ingestion.

Tool Search (MCP) for Scalability

As agentic tool libraries scale into the hundreds, loading every schema simultaneously becomes a bottleneck. The Model Context Protocol (MCP) “Tool Search” mechanism introduces a lazy-loading architecture.

| Strategy | Context Window Impact | Optimization Percentage |

|---|---|---|

| Standard Loading | High (Full schema library) | 0% (Baseline) |

| Tool Search + Lazy Loading | Minimal (~500 tokens for Search tool) | ~80% Improvement |

These efficiencies ensure the agent remains responsive and cost-effective, directly contributing to higher agentic accuracy by focusing the model’s “attention” on actionable data.

3. Enhancing Agentic Robustness through Precise Parameter Execution

In production workflows, “valid JSON” is a low bar; the true challenge is “correct usage.” Hallucinated parameters or malformed nested structures often break agents in complex support or technical scenarios.

Input Examples for Complex Tool Definitions

The “Input Examples” feature provides the model with a reference array of correct tool calls within the definition itself. This is critical for navigating nested structures—such as linking specific SLA hours to escalation tiers—where the relationship between optional parameters is not always intuitive.

Quantifying Accuracy Gains

The implementation of structured input examples yields a drastic improvement in execution reliability:

Pre-Optimization: 72% Accuracy vs. Post-Optimization: 90% Accuracy

This 18-percentage-point gain is the margin between an experimental tool and a reliable enterprise service.

MCP vs. CLI-Based Approaches

While some developers have migrated to Command-Line Interface (CLI) based tools to save tokens, the MCP approach is architecturally superior due to “Type Safety.” Because the LLM remains aware of the exact input schema and expected patterns, it maintains a level of execution precision that CLI-based methods, which lack structured schema awareness, cannot replicate.

4. Strategic Summary: Quantified Value for Production Environments

The adoption of Tool Calling 2.0 provides a clear path to reducing the Total Cost of Ownership (TCO) while enhancing the reliability of AI agents. By moving away from high-latency “ping-pong” interactions, organizations can deploy faster, leaner, and more capable agents.

Quantified Takeaways:

- Operational Speed: Dramatic reduction in round-trips via code-based loops and local conditional logic.

- Cost Efficiency: 30–50% overall token reduction through programmatic data localization.

- Scalability: 80% context optimization for large tool libraries via Tool Search and Lazy Loading.

- Reliability: 18-percentage-point increase in parameter accuracy via the Input Examples feature.

Implementation Directive

To capitalize on these gains, technical leadership must update agent runtimes to support the code_execution_20260120 tool. Crucially, developers must implement the allowed_callers parameter; this architectural logic explicitly designates the code execution tool as a valid caller of other tools, enabling the programmatic loop. Furthermore, all web-scraping dependencies should be migrated to webfetch_20260209 to enable dynamic filtering.

The future of autonomous agent architecture lies in the shift from text-based orchestration to a sophisticated, programmatic execution model that prioritizes context efficiency and execution precision.